Backlog updates

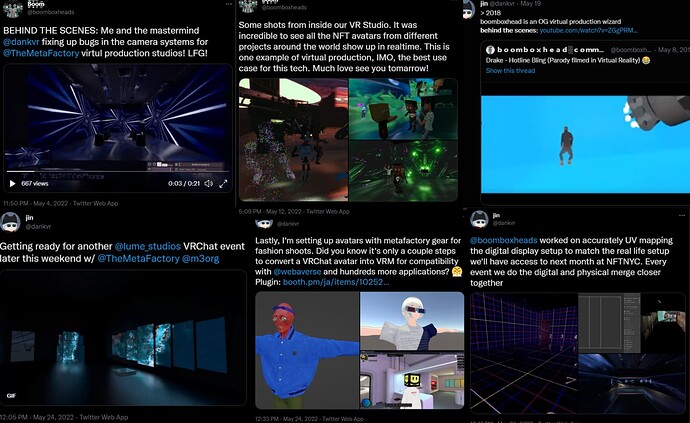

Apologies for the week gap. I’ve been in crunch mode preparing for the event, and this thread has for a while served as documentation that’ll come in handy for post-production.

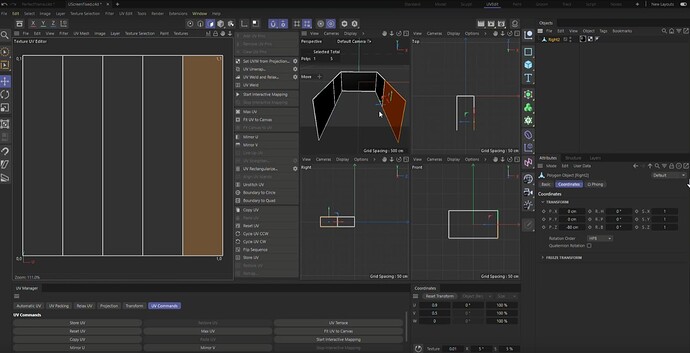

UV mapping

Previous notes:

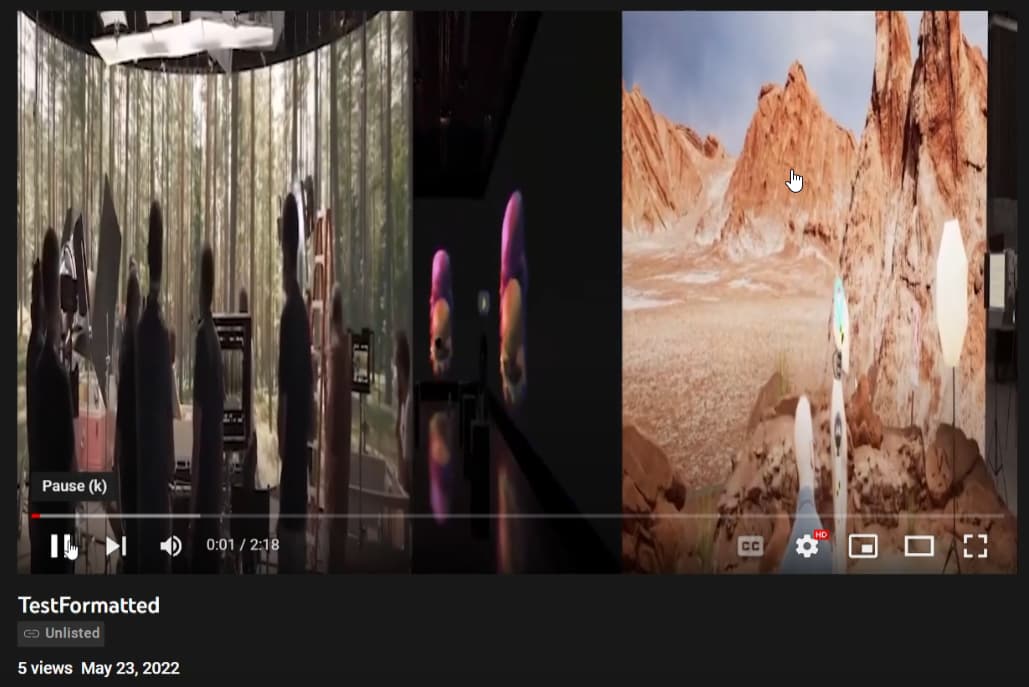

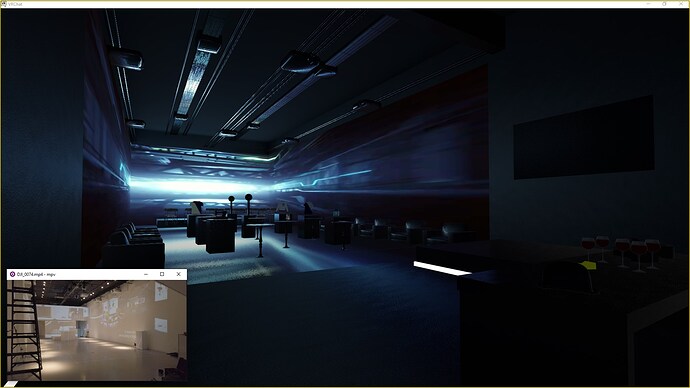

Just want to mention that this is what the video looks like when exported into the VRChat version of Lume Studios. It’s essentially 9600x1080 scrunched into a 1080p video (1080p is whatever YouTube plays by default, but if you self-host it can default to a high resolution like 8K automatically).

Watch on YouTube: Lume Anamatic Rough 1 - YouTube

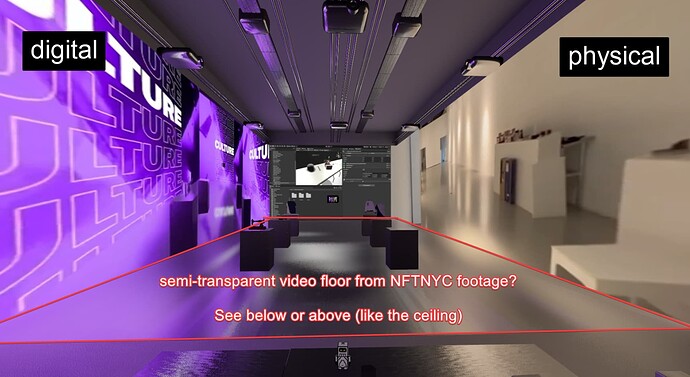

We can preview the wall screens pretty quickly when they are all merged into one video. It looks cool when one side is content from the digital world and the other side is video from the physical event. We should lean into this setup for a VR encore event, or perhaps use the floor / ceiling to blur reality between them.

This UV map proposes that in the future, instead of 1 render texture target, we could have 1 per projector screen. Certain limitations of the video players we used prevented us from doing that in VRChat.
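To make the single-texture setup a bit more concrete, here is a rough Python sketch of how one merged strip could be sliced into per-screen UV rectangles. The 9600x1080 source and the 1080p (1920x1080) target come from the notes above; the screen count of 5 and the equal-width side-by-side layout are assumptions for illustration only, not the actual Lume layout, and `UVRect` / `split_strip` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class UVRect:
    """Normalized texture coordinates (0..1) for one screen's slice."""
    u_min: float
    u_max: float
    v_min: float
    v_max: float


def split_strip(num_screens: int) -> List[UVRect]:
    """Divide a side-by-side strip texture into equal-width UV rectangles."""
    if num_screens < 1:
        raise ValueError("num_screens must be at least 1")
    return [
        UVRect(i / num_screens, (i + 1) / num_screens, 0.0, 1.0)
        for i in range(num_screens)
    ]


SOURCE_WIDTH = 9600   # merged strip width, from the notes
TARGET_WIDTH = 1920   # width of a 1080p video
NUM_SCREENS = 5       # ASSUMPTION: hypothetical screen count, equal widths

# How much the strip is squeezed horizontally to fit a 1080p frame.
squeeze = SOURCE_WIDTH / TARGET_WIDTH

# Horizontal pixels each screen actually gets inside the 1080p video.
pixels_per_screen = TARGET_WIDTH / NUM_SCREENS

rects = split_strip(NUM_SCREENS)

if __name__ == "__main__":
    print(f"horizontal squeeze: {squeeze}x")
    print(f"pixels per screen in the 1080p video: {pixels_per_screen}")
    for index, rect in enumerate(rects):
        print(f"screen {index}: u = {rect.u_min:.2f} .. {rect.u_max:.2f}")
```

Because UVs are normalized, the same rectangles apply whether the player decodes the strip at 1080p or at a higher self-hosted resolution; only the detail available to each screen changes. With 1 render texture per projector screen, each screen would simply use the full 0..1 range of its own texture.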

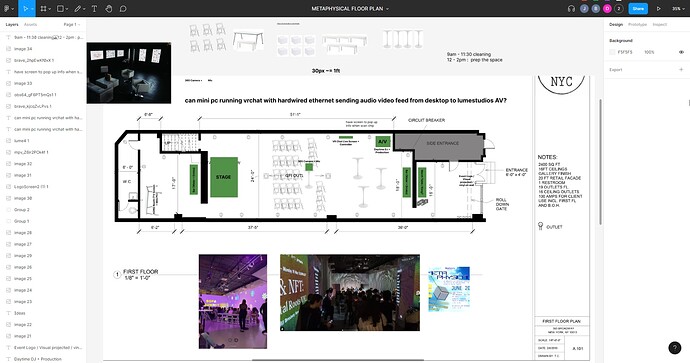

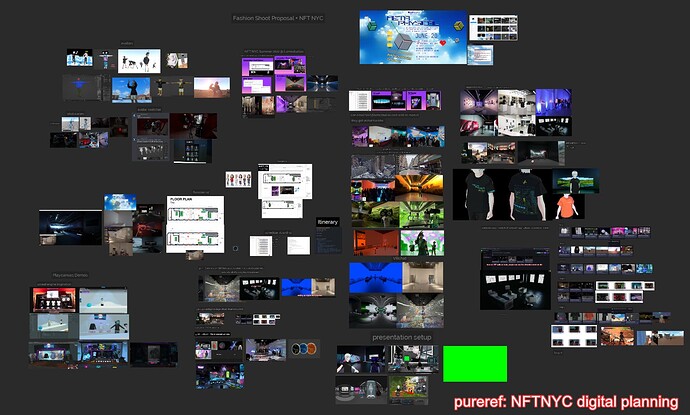

Planning

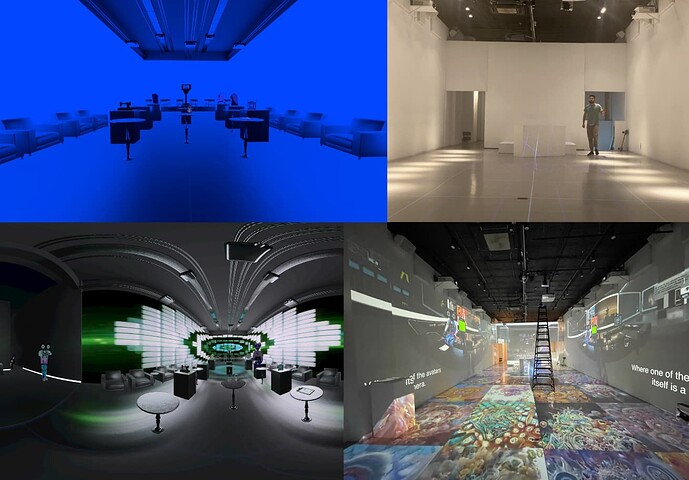

We all synced up on Figma and Zoom with the venue owner to do some floor planning of how the space might look throughout the main events.

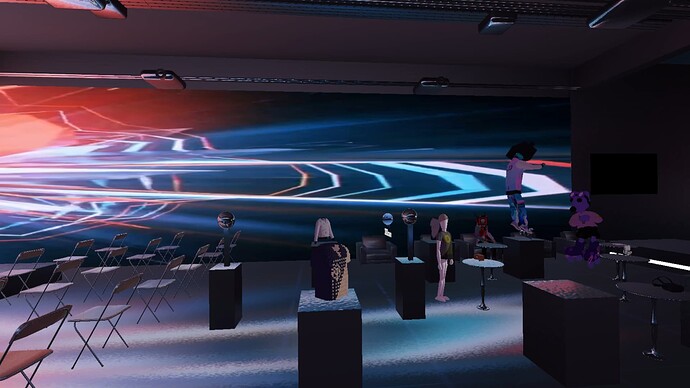

Boomboxhead then modeled out the VR space based on the floor plan to give us a preview of the layout spacing.

We added some props on top of the pedestals to create conversation pieces that help tell the narrative of what MetaFactory does, from physical to interoperable digital goods.

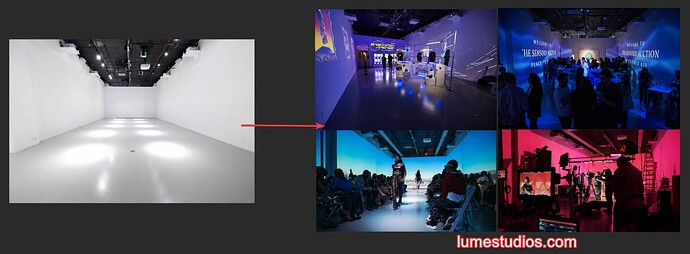

Here’s a preview of how the physical and the digital venue look next to each other on the morning of the event before building the physical event began.

Videos

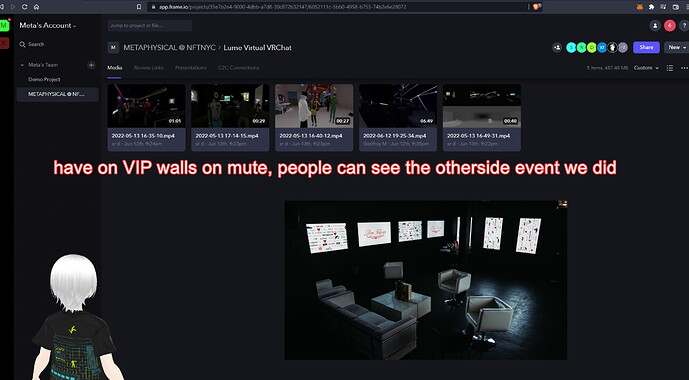

For much of the past couple of weeks we focused heavily on producing video content for the event, down to the last second before the show. We adopted Frame.io to upload content and get rapid feedback, which was really clutch. Frame made it fast to comment and scrub through footage, and it integrated directly into Adobe software for editing.

Sometimes while editing, boomboxhead and I ran into storage space issues because of how big high-resolution projects can get. Only later did we find out that Lume uses VJ software like Resolume and supports NDI, which gave us lots of power and flexibility in how we display content on the screens.

Overall we uploaded over 160 GB of digital content to Frame. I recorded tons of 4K 360 video to see how it would look displayed in the physical venue, although I don’t think we got to use any for that purpose.

I recorded a few looped videos for the downstairs VIP blackroom screens:

MF Store

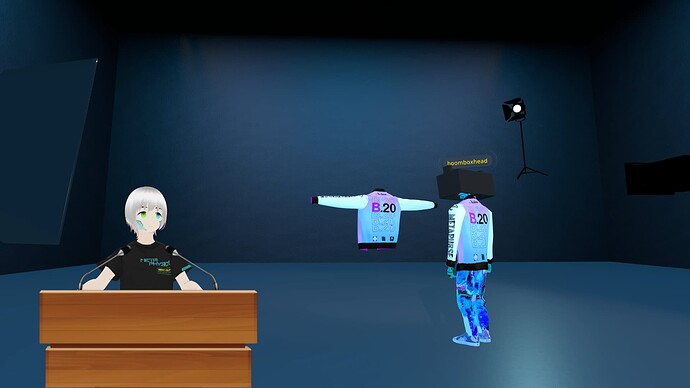

We wanted to pre-record some content as a plan B + a few practice rehearsals for presenting the vision. In one night Boomboxhead basically forked the MF shop project into something almost totally new / revamped.

Before

After

For a presentation we gathered in the lounge area. I acted as the 360 camera, capturing 360 video from various angles (so we can test later how it looks in the Lume Studios setup), while boomboxhead, as DAOFREN, asked Drew (green screen) questions and a guest cat joined us. Clips of this can go into a post-production documentary.

During production boomboxhead worked on a 3D model photo studio that was inside VR. With a hotkey we can switch between camera views and take pics / video from our desktop screens. More deets will be in a separate thread (fashion shoots related).